
The BotPrize competition challenges programmers, researchers, and hobbyists to create a bot for a Unity FPS (first-person shooter) game that can fool opponents into thinking it is another human player. In the competition gaming environment, computer-controlled bots and human players (judges) meet in multiple rounds of combat, and the judges try to guess which opponents are human. To win the prize, a bot has to be indistinguishable from a human player. In other words, it has to pass this adapted version of the Turing Test.

Introduction


• Can an artificial agent behave in a way that is indistinguishable from a human player in an FPS game?

• The competition strongly aligns with the IEEE Conference on Games (CoG) scope, addressing multiple core topics. The competition also contributes to ongoing discussions in CoG regarding beyond-score evaluation metrics and human-centered AI evaluation.

• By using Unity, one of the most popular game engines worldwide, the competition has strong potential to attract all kinds of participants. The low-cost, open, and accessible nature of the platform lowers the entry barrier compared to legacy commercial games.

• While inspired by BotPrize 2014, the competition introduces several novel elements, such as a modern, open, Unity-based FPS environment; full control over game mechanics;…

• Research impact: provides a reusable benchmark for human-likeness in games; encourages alternative evaluation paradigms beyond win rates; facilitates reproducible experimentation.

• Educational impact: suitable for university courses on Game AI and AI in Games; encourages student participation due to accessibility; can be used for project-based learning.

Rules & Dates

The proposed competition revisits and modernizes the classic BotPrize competition originally presented at IEEE CIG 2014. BotPrize introduced a novel evaluation paradigm for game AI agents, focusing not on performance or win rate, but on human-likeness, assessed through a Turing Test–inspired methodology.

In the original competition, AI-controlled bots competed against human players in Unreal Tournament 2004, and human judges voted on whether each observed player was human or machine. The main objective was to measure how convincingly artificial agents could emulate human behavior in a fast-paced first-person shooter (FPS) environment.

In this new edition, we propose a reimplementation of the BotPrize concept using the Unity FPS Microgame, modified to support networked multiplayer gameplay and AI agent integration.

Unlike the closed-source legacy engine used in the original competition, Unity provides a modern, open-source-friendly, extensible, and widely adopted development environment, enabling a broader range of AI techniques, including learning-based, hybrid, and cognitively inspired approaches.

The core problem remains:

Can an artificial agent behave in a way that is indistinguishable from a human player in an FPS game?

Deadline Dates

• Registration open until: 15th April 2026

• Approach submission deadline: 15th July 2026

• Evaluation phase: end of July 2026

• Results announcement: during the CoG 2026 competition session

• Finalists will be announced in advance to encourage attendance

Judgement | Testing

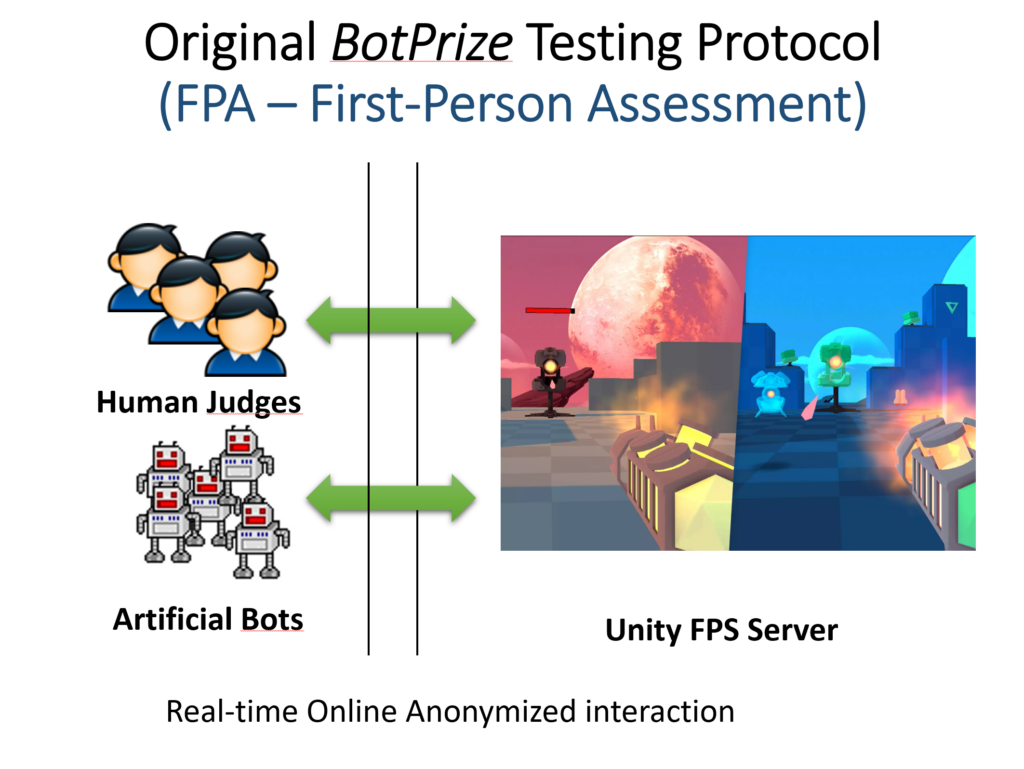

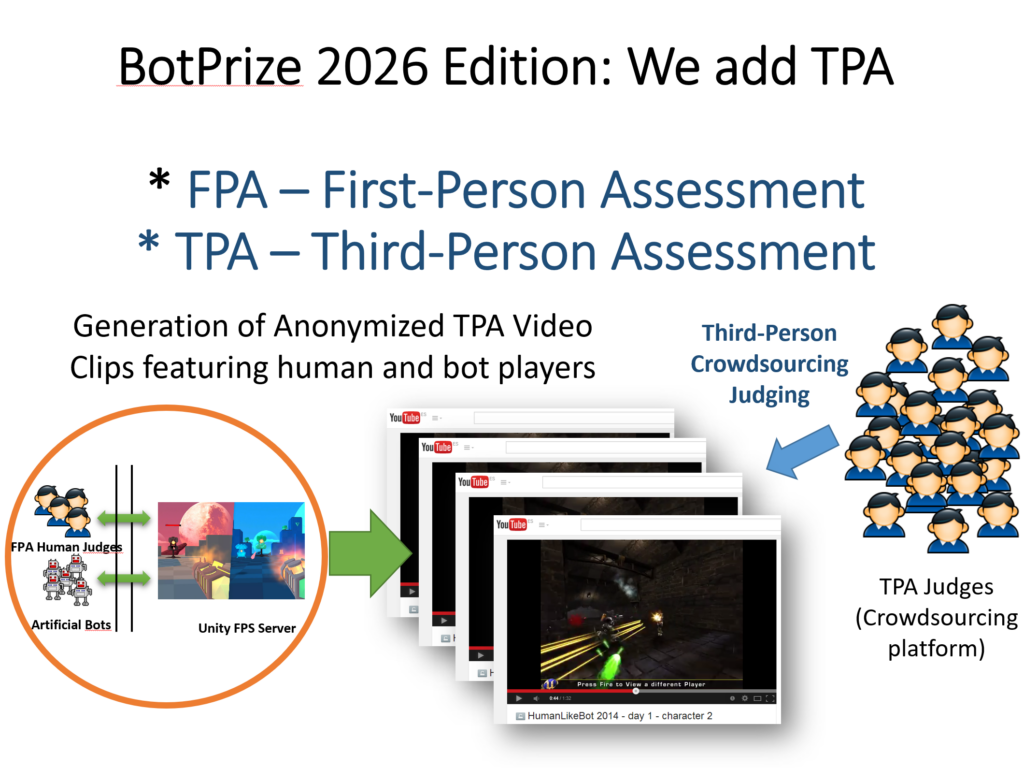

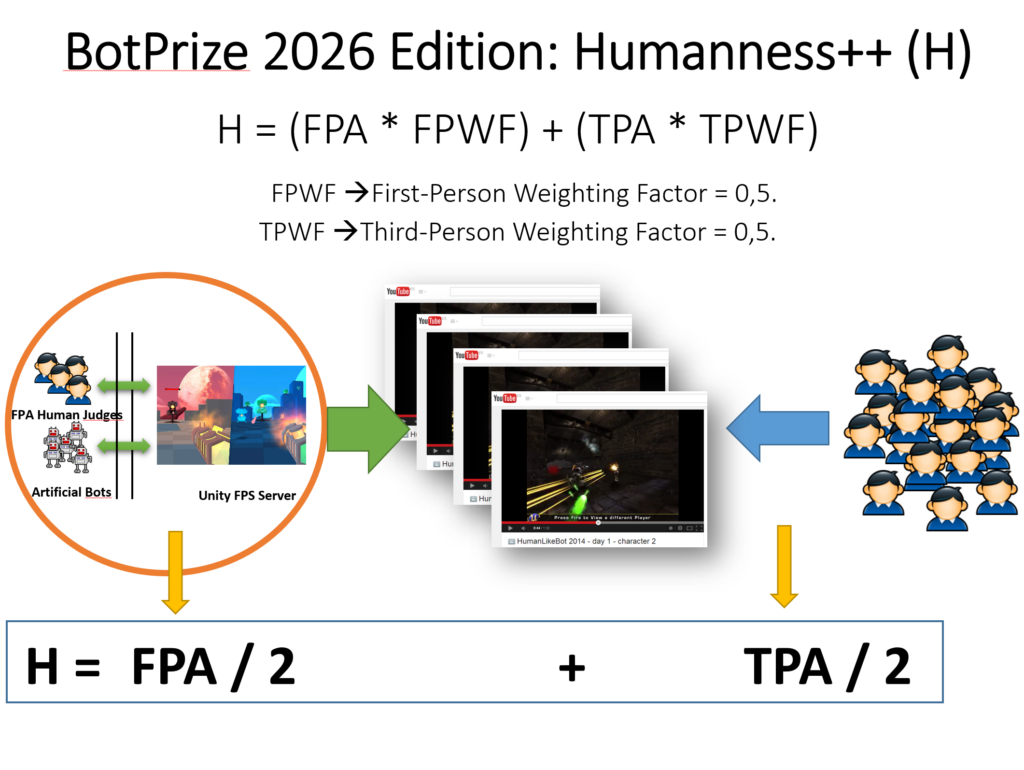

The submitted bots will be evaluated in two phases:

- In the first phase, the game server will host one human player for each submitted bot. During play, the human players (who act as judges) will use a voting tool to mark each player as human or bot.

- In the second phase, participants must record and share videos of their gameplay (both bots and humans), and judges will vote on which recorded players are human and which are not.
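This page does not state how the judges' votes are aggregated into a result. As a rough sketch only, assuming each vote is stored as a (player, judged-human) pair and a player's score is the fraction of votes that judged it human, the tally could look like the following. The function name `humanness_ratings` and the sample player ids are hypothetical and are not part of the competition tooling.

```python
from collections import defaultdict


def humanness_ratings(votes):
    """Return, per player, the fraction of votes that judged it human.

    `votes` is an iterable of (player_id, judged_human) pairs, where
    judged_human is True when a judge marked the player as human.
    This scoring rule is an assumption made for illustration.
    """
    total = defaultdict(int)
    human = defaultdict(int)
    for player_id, judged_human in votes:
        total[player_id] += 1
        if judged_human:
            human[player_id] += 1
    return {player_id: human[player_id] / total[player_id] for player_id in total}


# Hypothetical votes collected across rounds.
votes = [
    ("bot_a", True), ("bot_a", False), ("bot_a", True), ("bot_a", True),
    ("human_1", True), ("human_1", True),
    ("bot_b", False), ("bot_b", False),
]
ratings = humanness_ratings(votes)
for player_id, rating in sorted(ratings.items()):
    print(f"{player_id}: {rating:.2f}")
```

Under this assumed rule, a bot's rating can be compared directly with the ratings the human players receive, which is one way to express "indistinguishable from a human player" as a number.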

Organizers

Juan Peralta Donate, Universitat Politècnica de València (email: jperdon@dsic.upv.es)

M.-Carmen Juan Lizandra, Universitat Politècnica de València (email: mcarmen@dsic.upv.es)